Antonia Landi: “AI is just another tool. Start treating it like one.”

Believe it or not, I’ve been giving talks about irresponsible tooling since 2023.

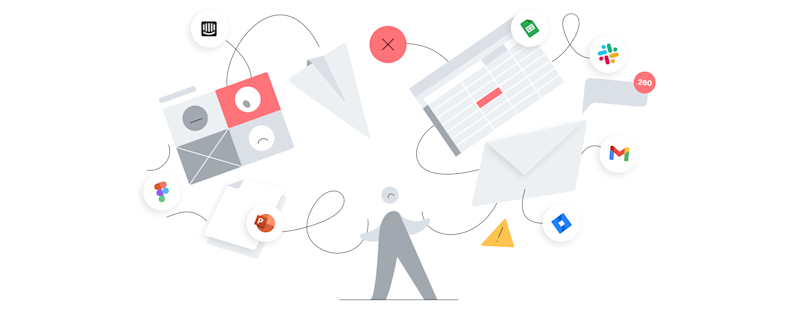

Back then, the culprit was undisciplined adoption – teams drowning in subscriptions to tools that each solved slightly different problems, none of which were properly integrated, and all of which quietly compounded into a tool stack nobody could ever fully map or maintain.

Getting organizations to pare this back was hard, slow, unglamorous work. The business case was certainly there – licensing costs had exploded, onboarding became unmanageable, and the hidden tax of maintaining dozens of integrations and fighting stale information was an undercurrent that slowly became palpable to everyone. But still, it never felt urgent enough to prioritize until something actually broke.

Slowly, we made progress. Organizations learned to be more disciplined about where they put their time, money, and effort. There were fewer tools, better connections, more deliberate choices. It wasn't fancy or fun, but it worked.

Then AI arrived, and apparently we forgot everything we learned.


A bad kind of déjà vu

In the last 12 months I've spoken to leaders, coached practitioners, and watched from the sidelines as organizations across industries have bought AI tool licenses at a pace I haven't seen in years. The enthusiasm is real and, in many ways, understandable. These tools are impressive. Some of them can genuinely be transformative and the competitive pressure to "be doing AI" is not imaginary.

But here's what I have not heard once, not from a single person: a clear account of how their organization determined that a particular AI tool was the right solution to a specific, validated problem. I haven’t heard how it integrates with the tools they already have. Nor who owns it, maintains it, and is accountable for whether it delivers. Nor what success looks like, and how they'll know when it isn't working.

What I hear instead is: "We've rolled out [tool] across the product team." That’s it.

As someone who’s made it their business to help companies figure out their tool stack, let me tell you: That's not an adequate tooling strategy. And in my experience, a mismanaged tool stack has a way of becoming a very expensive problem.

The debt nobody’s tracking

Tool-stack debt is real, it compounds, and it almost never appears on a balance sheet until it's too late.

Lately, it looks like this: A team adopts an AI writing assistant because one PM loved it and expensed it. Then another team adopts a different one, because they preferred its UX. Then someone in research buys a third because it has a specific synthesis feature the others lack. None of these tools talk to each other. None of them are integrated into existing workflows in any meaningful way. Nobody owns the admin. The licenses renew automatically.

And gradually, what started as three enthusiastic individuals trying to work smarter has become a fragmented, unmaintained, ungoverned layer sitting on top of your existing operating system – interacting with it in ways nobody fully understands, and nobody designed.

Now multiply that by every function that's currently "experimenting with AI."

The real cost of this isn't the licensing fees (though those add up) – it's the complexity. It's the cognitive load of teams switching between tools that were never designed to work together. It's the data that lives in five places instead of one. It's the institutional knowledge that gets trapped inside a tool that half the team doesn't even have access to. It's the three months you'll eventually spend trying to untangle which tool is the source of truth for what.

But most of all, it's the opportunity cost. Because when operating systems are fragmented, organizations cannot move at the speed they’re capable of. It’s scary to think that AI adoption can actually make you slower, not faster. And that’s the problem right there, because right now, the organizations that can move fast and move coherently are the ones that are winning.

Old problems, accelerated

The irony is that product organizations have known this for years. One of the most consistent themes in how mature product teams operate is that a leaner, better-integrated tool stack outperforms a sprawling one. Not because fewer tools are cheaper, but because integration, coherence, and shared context are what actually allow teams to move quickly and make good decisions.

The best-run teams I know don't have the most tools. They have the right tools, deliberately chosen, properly embedded, and regularly audited.

What AI has done is create pressure to abandon this level of discipline at exactly the moment it matters most. AI tools feel different – more powerful, more novel, more existentially urgent – so it feels as though the normal rules shouldn't apply. “We need to move fast, now! Can’t we just figure out governance later?”

But "figure out governance later" is exactly how tool-stack debt gets created in the first place.

AI is a solution hypothesis

A new tool – any new tool, including AI – is one of many possible solutions to a given problem. It is a hypothesis, not a foregone conclusion. Like all hypotheses, it needs to be tested against the actual problem before you commit to it at scale.

That means starting with the problem, not the tool. What specific capability does your organization lack? Where is the measurable bottleneck? What would change concretely, measurably, if this worked? These are not bureaucratic questions. They are the difference between a tool that genuinely transforms how your team works and one that gets used enthusiastically for three weeks before fading into the background of your monthly OPEX.

It also means doing the unglamorous work that comes after the purchase decision. Who will own this tool? Who’s responsible for ensuring teams are actually using it effectively? How does it connect to your existing stack, not in theory, but in practice? What are you replacing, and what happens to the institutional knowledge that currently lives in the thing you're replacing?

None of this is anti-AI. I use AI tools. I recommend AI tools. Some of them are genuinely remarkable. But remarkable capability and organizational fit are two entirely different things, and conflating them is how organizations end up with lots of bills and nothing to show for it.

What does responsible AI tooling actually look like?

For senior product leaders coming into the AI tool market, I'd offer the following set of questions to navigate this moment. Sit with them before the next renewal lands in your inbox:

Have you validated the problem this tool is solving? Not assumed it, validated it. Do you know, specifically, where your team is losing time or making lower-quality decisions, and have you confirmed that this tool addresses that gap?

Do you have success criteria? If you can't articulate what "this is working" looks like in measurable terms, you have no basis on which to evaluate whether the investment was sound – or to make the case for continuing it.

Does this tool integrate with how work actually gets done? Not tomorrow, today. A tool that lives outside your existing operating model will be used sporadically at best, generating isolated outputs that never feed back into the system. The value of any tool is proportional to how well it connects to everything else.

Who owns it? This is the question most organizations skip, and it's a hard but necessary one to answer. Ownership means someone is accountable for adoption, maintenance, integration, and the ongoing question of whether this is still the right tool six months from now.

What are you consolidating? Every new tool you add increases the complexity of your operating system. The most disciplined organizations I work with treat every addition as a prompt to retire something else.

Above all, remember this: A coherent, well-connected system will always outperform a fragmented one.

AI done right

The organizations that will get the most out of AI are not the ones that adopt the most AI tools. They're the ones that integrate AI thoughtfully into a coherent operating system, where insights flow between tools, context is shared rather than siloed, and the humans using those tools spend their time on judgment rather than on managing the tools themselves.

The competitive pressure to move quickly is real. But speed without coherence isn't an advantage. In fact, it’s a disservice to everything that came before it.

As leaders, you have an opportunity right now, before the debt matures, to be the ones who asked the right questions early. Who insisted on a validated problem before a purchased solution. Who built an operating system that could actually absorb new capability rather than be destabilized by it.

That's how you win the AI race.

Antonia Landi
