Your platform metrics are telling the wrong story: Connect technical output to business impact

Most platform teams communicate success as "We improved our ticket throughput by 30%." Great platform teams communicate success as "We enabled four teams to deliver compliant AI-powered features faster, leading to a 5% increase in enterprise contracts." The first is purely about team mechanics. The second connects to business strategy. Same work. Different story.

Communicating this way is not easy. Platform teams, by definition, are at least one layer removed from end customers and therefore don't directly impact revenue. Many organizations treat them as pure cost centers. And it doesn't help that most platforms' success narratives rest solely on throughput metrics or budget optimization.

But staying within the technical cost center narrative leads to zero-sum budget conversations – and sometimes even an ongoing debate about whether the platform team needs to exist at all.

A tale of two traps

In the first article of this series, I described three anti-patterns platform teams fall into under pressure: elaborate scoring frameworks, dependency management processes, and requirement intake optimization. None of them works because they manage symptoms without addressing the root cause. They also tend to come with metrics that quietly work against the team.

Technical excellence

When I talk to platform teams about the metrics they track, they often show me Jira dashboards full of velocity statistics and throughput metrics. And more advanced teams might follow tracking frameworks such as DORA or SPACE to get a solid view of technical quality, platform robustness, and team health.

Having these metrics is great, but leading with them in communication to non-technical leadership can backfire. You present them, and everyone expects them to go up. The conversation becomes: more output, more stability, less cost.

Focus on cost

The other pattern I see is when teams are more conscious of the business's fiscal reality: They start tying development costs to a release. Every feature comes with a price tag. Some even center this cost narrative around their planning and prioritization cycles.

Cost awareness matters. But leading with it is dangerous. It invites exactly the conversation you don't want: How to cut costs. Leadership and stakeholder discussions turn into haggling. You see this when PMs spend time assigning costs to tickets, or when stakeholders focus on how many engineers should work on a given initiative. Meanwhile, the actual goal gets lost entirely.

The trap

Both measurements are important, but on their own, they are poor tools for communicating outside the team. They work within the team to optimize, but as soon as they are used externally, they invite the wrong conversation.

Instead, focus on value. If the organization spends €50k per sprint on this team but gets a €200k return, optimizing costs or speed should no longer be the discussion.
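The arithmetic behind that argument is trivial, which is exactly why it is useful in a budget conversation. Here is a minimal sketch using the illustrative figures above; the function name `return_multiple` is made up for this example, and real value figures will rarely be this clean.

```python
def return_multiple(cost_per_sprint: float, value_per_sprint: float) -> float:
    """Return how many euros of value come back per euro spent."""
    if cost_per_sprint <= 0:
        raise ValueError("cost_per_sprint must be positive")
    return value_per_sprint / cost_per_sprint


# Illustrative figures from the text, not real data.
cost = 50_000
value = 200_000

multiple = return_multiple(cost, value)
net_value = value - cost
print(f"{multiple:.1f}x return, €{net_value:,} net per sprint")
```

With a multiple like this on the table, shaving a few percent off the cost side is the least interesting lever in the room.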

But how do you actually shift the conversation to those €200k? The platform won't generate this revenue directly. The impact is always indirect. You have to do the extra work and trace a path from what the platform provides to the business impact. From consumer action to business impact.

This consumer-action-to-business-impact reasoning leads to a different conversation entirely. Instead of communicating team metrics or ranking requests, you're making explicit what matters most to the business right now.

Connecting consumer actions with business impact

Before we head into how to create this reasoning, one short warning: I see two failure modes. First, teams that optimize purely for consumer (often developer) experience – and wonder why leadership doesn't care. Second, teams that only look at the business side – and never actually solve the right problem. Both miss. Just in opposite directions.

Holding both at once is the core tension in platform product management.

Start with humans

In my last article, I went deep on differentiating stakeholders. That groundwork applies directly here, when defining good consumer goals. What are your consumers' goals, and how can your platform help them reach those goals?

You need to deeply understand your consumers' workflows and how your platform fits in. For example, instead of staying at an abstract “account managers get faster,” go concrete like “account managers reduce the time to get a full account overview from 10 minutes to below one minute.” Don't describe a feature or solution. Describe the specific context and improvement.

Whether your platform eases another developer's workload or empowers a compliance analyst to review complex payment flows, it does so by letting people take a specific action. Define these actions. Those are the most direct indicators to measure.
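One way to keep such an action concrete is to write it down with a baseline and a target, then check observations against it. The sketch below assumes the account manager example from above; the `ConsumerGoal` class, its fields, and the sample timings are all hypothetical and only illustrate the shape of the record.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class ConsumerGoal:
    consumer: str            # who takes the action
    action: str              # the specific action the platform enables
    baseline_minutes: float  # how long it takes today
    target_minutes: float    # the improvement you are aiming for

    def is_met(self, observed_minutes: list[float]) -> bool:
        """True when the median observed time is below the target."""
        return median(observed_minutes) < self.target_minutes


goal = ConsumerGoal(
    consumer="account manager",
    action="get a full account overview",
    baseline_minutes=10.0,
    target_minutes=1.0,
)

# Made-up observations, in minutes, purely for illustration.
samples = [0.8, 1.4, 0.7, 0.9, 0.6]
print(goal.is_met(samples))
```

Using the median rather than the mean is a design choice for this sketch: a single slow outlier should not hide the fact that most consumers already reach the target.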

Mirror with business impact

Identifying consumer goals surfaces many opportunities to improve their work. But only by connecting those actions to business goals will you have a solid reason to pursue any given one.

To clarify business impact, look at your highest-level business drivers. I like to use a model that differentiates between four core drivers: increase revenue, protect revenue, reduce costs, and protect costs. I picked this up from Joshua Arnold and John Cutler. In my experience, separating protection aspects into revenue and costs aligns typical platform expectations around governance, compliance, reliability, and other passive drivers with high-level business impact.

Sometimes just one of the four drivers is relevant, but at other times multiple are involved in the same logic.

Trace the path

The consumer action and the business impact are the two ends of the same story. But you can't just define these two and claim a connection. Platform product management's job is to find this connection based on the actual business model.

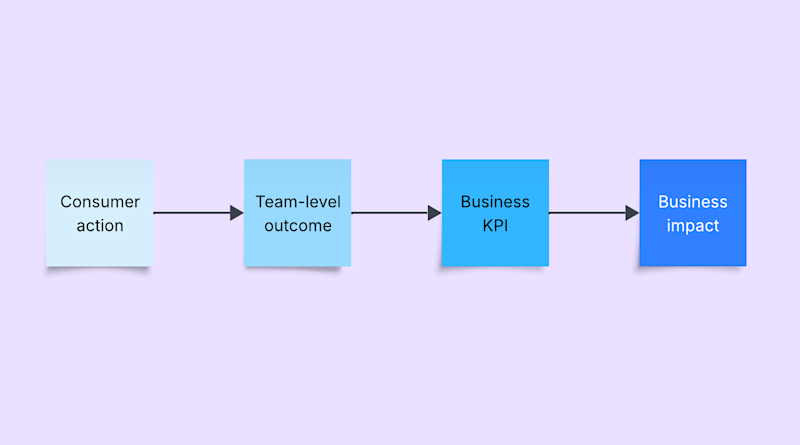

The rough model looks like this: consumer action → team-level outcome → business KPI → business impact.

There can be more levels and more detail, depending on the organization's size and structure, but the logic that connects a consumer action to business impact remains the same.

Defining the logic should not be a never-ending research project. Start with simple logic and improve with data. The goal is not to have a perfect, proven model on day one, but to start with a shared rationale.

Getting to good models takes time. Be okay with imperfect first attempts, and ask to be challenged. The key is the conversation and what you learn along the way, not being right.

For the account manager who needs quicker access to a full account overview, it could look like: Time to full overview → improve account manager workflow → improve sales closure rate → increase revenue.
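Writing the chain down as a small data structure can help keep every level explicit and comparable across initiatives. This is only a sketch under assumptions: the `ImpactPath` class and `Driver` enum are hypothetical names, the four levels follow the rough model above, and the enum values mirror the four drivers named earlier.

```python
from dataclasses import dataclass
from enum import Enum


class Driver(Enum):
    """The four high-level business drivers used in this article."""
    INCREASE_REVENUE = "increase revenue"
    PROTECT_REVENUE = "protect revenue"
    REDUCE_COSTS = "reduce costs"
    PROTECT_COSTS = "protect costs"


@dataclass(frozen=True)
class ImpactPath:
    consumer_action: str
    team_outcome: str
    business_kpi: str
    business_impact: Driver

    def describe(self) -> str:
        """Render the path as a single line, one arrow per level."""
        steps = [
            self.consumer_action,
            self.team_outcome,
            self.business_kpi,
            self.business_impact.value,
        ]
        return " → ".join(steps)


account_overview = ImpactPath(
    consumer_action="time to full overview",
    team_outcome="improve account manager workflow",
    business_kpi="improve sales closure rate",
    business_impact=Driver.INCREASE_REVENUE,
)

print(account_overview.describe())
```

Forcing the last step to be one of the four drivers is deliberate: a path that cannot name its driver is a signal that the logic is not finished yet.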

The consumer need is central in this model, but it doesn't always start with the consumer. As discussed in the second article, you might need to reason first from a governance perspective, then identify the behavior changes you need from consumers in a second step. And then connect to business impact.

Don't expect people to just nod along. Business logic feels threatening when teams have been rewarded for technical metrics. You're not just changing the language – you're challenging what success looks like. Always connect your new model to the current conversation. Don't expect the mindset to shift overnight.

Where are the features?

Don't consider solutions and features until the connection is clear and everyone agrees on the opportunity. Feature ideas and approaches naturally surface while you're still looking into consumer actions and business rationale. Note them down and park them for later. In my experience, you may never revisit that list, but writing things down frees up headspace to stay with the problem.

With a clear consumer context aligned with the business logic, multiple possible solutions begin to surface on their own.

Don't we just have to measure adoption?

One metric many platform teams start to track is adoption: how much their platform is actually used. This is a great starting point. But optimizing for adoption alone is like optimizing for revenue: it's too abstract to guide decisions, and it again opens the door to any random idea.

And watch out: If your platform use is mandated, adoption numbers are meaningless. Teams aren't choosing you. They have no choice.

How does this look in the real world?

Let's illustrate this with a few examples.

Unified payment platform

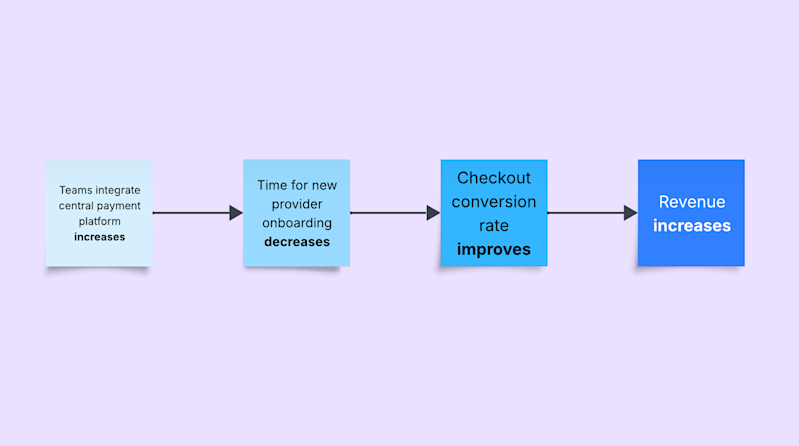

You might remember the payment platform from the first article.

A platform team discovers that multiple teams have integrated payment handling and are duplicating work through improvements and maintenance. Integrating new payment providers, in particular, takes a long time and blocks other work.

A central solution would eliminate duplicate maintenance costs and also open the door to integrating new payment providers globally. This leads to improved checkout conversion rates, increasing revenue.

Each step of this logic enables the next. The platform team must make it easy for the other teams to adopt and integrate the payment platform. This is a core piece that enables higher checkout conversion rates, which the platform team has no direct influence over. This reasoning connects adoption to a clear business logic every leader cares about.

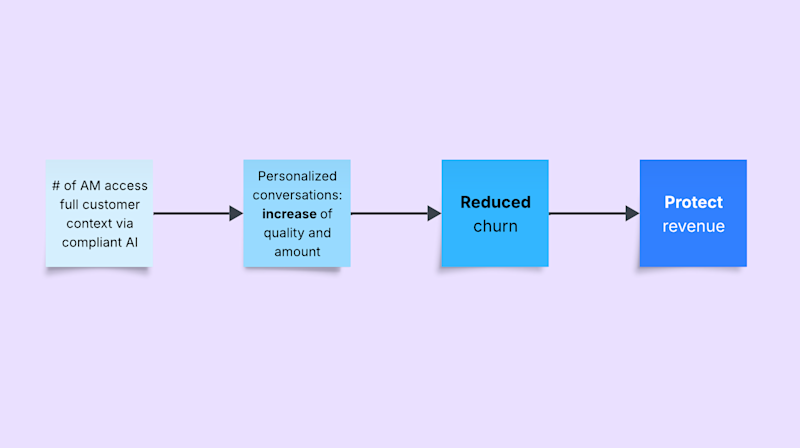

Compliant AI usage for customer data

Another example, possibly familiar: Leadership is asking every role to "use AI." Second-level support would love to save time with a summarized context on the case and customer. And account managers want insights into customer history, including support activity. But just putting customer data into an AI system doesn't comply with privacy regulations: access controls, data classification, and more are required. Either the leadership mandate remains theoretical, or teams scramble to navigate complex legal and tooling questions on their own.

A platform providing secure, internally wired AI systems can make this strategic goal real for multiple teams. These systems guarantee the right access controls and AI capabilities, not just to speed up consuming teams, but to protect revenue through compliant service and reduced churn.

The platform enables account managers to work with customer data securely and with full context. That leads to reduced churn and stronger renewal conversations, protecting revenue.

What changes when you speak this language

Budget conversations transform

Not everything clearly maps to money, and many platform capabilities enable multiple business returns. The goal is to shift from a feature- and cost-centric conversation to one about the value the platform can provide. Platform work needs to be legible for a wider audience.

Instead of constantly negotiating spend, the conversation shifts to what's worth investing in. That also paves the way for measuring success with data rather than opinions.

Support your leadership

A leader who walks into a budget discussion and says, "Our platform team supported €X in revenue growth by providing Y for Z" has a fundamentally different position than one who says, "The deployment frequency improved 300%."

Remember the influencer and sponsor perspectives from the second article. Stakeholders representing your team in non-IT circles are better equipped when your team speaks business language. They can reuse the team-level logic in their own discussions. The reasoning remains consistent across levels and naturally folds into higher-level goals.

Prioritization becomes defensible

Many prioritization scoring systems include some form of value or ROI. Often, these numbers are pure guesswork, and politics decides the rest. Most people have sat in a meeting where everyone suddenly presented a 9-out-of-10 impact score for their initiative. In contrast, clear business reasoning grounds prioritization in something concrete.

Moving the discussion toward what can actually be done for consumers and how it ties into business strategy goes beyond opinion. It also enables the team to do better product discovery. With a clear, measurable behavior change in mind, you can test multiple ideas and see what works. De-risking ideas early stops being a hard sell. It becomes obvious to everyone around you.

Just start

The first move is simple: talk to one consumer tomorrow to understand how they use your platform, and write one sentence about what that enables for the business. Everything else follows from that.

Christoph Steinlehner
